Claude Opus 4.7 vs GPT-5: Which AI Model Should Your Business Use in 2026?

Introduction

April 2026 just delivered the most competitive week in AI history. On April 16, Anthropic released Claude Opus 4.7 — reclaiming the coding crown with 87.6% on SWE-Bench Verified and 64.3% on SWE-Bench Pro. Exactly seven days later, on April 23, OpenAI fired back with GPT-5.5 (codename “Spud”) — the first fully retrained base model since GPT-4.5, with state-of-the-art agentic performance and natively omnimodal architecture.

If you’re running an automation pipeline, building an AI agent, or evaluating which model to commit to for production workloads, the Claude Opus 4.7 vs GPT-5 decision matters financially. These are flagship-tier models with significantly different cost structures, different strengths, and dramatically different behaviors in real workflows. Picking wrong means either burning cash on the wrong model or watching your competitors ship better products with the right one.

Here’s the honest breakdown — based on official benchmark data, vendor pricing, and the first wave of real-world testing reports from the past week.

The Fast Answer: They Win on Different Axes

Before the deep dive, here’s the practical takeaway most teams need:

Choose Claude Opus 4.7 if your workload is coding-heavy — multi-file refactoring, code review, complex software engineering, or vision-based document processing. Opus 4.7 leads decisively on coding-resolution benchmarks.

Choose GPT-5.5 if your workload is agentic — long-running multi-tool orchestration, browser automation, computer use, terminal-based workflows, or web research at scale. GPT-5.5 leads on planning-and-execution benchmarks.

The smartest enterprises are doing both. Multi-model routing — sending coding tasks to Opus 4.7 and agentic tasks to GPT-5.5 — is the recommended production approach in 2026.

Now the details.

Benchmark Showdown: Where Each Model Wins

On the 10 benchmarks both providers report, Opus 4.7 leads on 6, GPT-5.5 leads on 4. The leads cluster by category, not by overall quality.

| Benchmark | Claude Opus 4.7 | GPT-5.5 | Winner |

|---|---|---|---|

| SWE-Bench Verified (real GitHub issues) | 87.6% | ~80% | 🏆 Opus 4.7 |

| SWE-Bench Pro (harder multi-language) | 64.3% | 58.6% | 🏆 Opus 4.7 |

| GPQA Diamond (graduate-level reasoning) | Lead | Trails | 🏆 Opus 4.7 |

| HLE (Humanity’s Last Exam, with tools) | Lead | Trails | 🏆 Opus 4.7 |

| MCP-Atlas (multi-tool orchestration) | 77.3% | 68.1% | 🏆 Opus 4.7 |

| MMLU Multilingual | 91.5% | 83.2% | 🏆 Opus 4.7 |

| FinanceAgent v1.1 | Lead | Trails | 🏆 Opus 4.7 |

| Terminal-Bench 2.0 (command-line agentic) | 69.4% | 82.7% | 🏆 GPT-5.5 |

| BrowseComp (web research) | Trails | 90.1% | 🏆 GPT-5.5 |

| OSWorld-Verified (computer use) | Trails | Lead | 🏆 GPT-5.5 |

| CyberGym (cybersecurity) | Trails | Lead | 🏆 GPT-5.5 |

| FrontierMath Tier 4 | 22.9% | 35.4% | 🏆 GPT-5.5 |

The pattern is unmistakable:

Opus 4.7 wins on codebase-resolution — SWE-Bench (the gold standard for software engineering), MCP-Atlas (multi-tool coordination), and reasoning-heavy review tests.

GPT-5.5 wins on planning-and-execution — Terminal-Bench, computer use, web research, and long-horizon tool sequencing.

They’re not competing on the same axis.

Pricing: The Real Math Behind Claude Opus 4.7 vs GPT-5

Both models charge $5 per million input tokens on the standard tier. The difference is on output:

| Pricing Component | Claude Opus 4.7 | GPT-5.5 |

|---|---|---|

| Input (per 1M tokens) | $5 | $5 |

| Output (per 1M tokens) | $25 | $30 |

| Long-prompt surcharge | 2× above 200K tokens | None published |

| Context window | 1M tokens input / 128K output | 1M tokens input / 128K output |

| Codex/Coding integration | Native (Claude Code) | Native (Codex, 400K context) |

| Pro/highest-tier variant | xhigh effort level | GPT-5.5 Pro: $30/$180 per 1M |

At face value, Opus 4.7 is 17% cheaper on output tokens. But the real cost picture is more nuanced.

Token efficiency changes the math. GPT-5.5 uses approximately 40% fewer output tokens than GPT-5.4 to complete the same Codex tasks — and 72% fewer output tokens than Claude Opus 4.7 on certain coding workloads. That structural difference means GPT-5.5 can be cheaper per completed task even though its per-token rate is higher.

Opus 4.7 is verbose. It explains, narrates, and documents as it works. That’s sometimes valuable — but in an agentic loop running thousands of times daily, verbosity becomes an expensive habit. Opus 4.7’s reasoning quality is exceptional, but the trade-off is faster context-window consumption and higher per-task cost when running long agentic sessions.

The practical pricing rule: For interactive coding sessions where humans review every output, Opus 4.7’s verbosity helps you understand what the AI did. For automated pipelines running overnight at scale, GPT-5.5’s conciseness saves real money.

Speed and Latency: A Surprising Split

Here’s where the comparison gets interesting:

- Claude Opus 4.7 has a notably faster time-to-first-token (TTFT) — approximately 0.5 seconds in production serving versus GPT-5.5’s roughly 3-second baseline (inherited from the GPT-5.4 architecture). For interactive surfaces — chat, IDE assistants, voice interfaces — this gap is felt instantly.

- GPT-5.5 has marginally higher per-token throughput (~50 tokens/second vs Opus 4.7’s ~42 tps) once generation starts. Combined with its 40% lower token usage per task, the wall-clock completion time for long autonomous runs often favors GPT-5.5 — but for short interactive tasks, Opus 4.7 feels faster.

- The takeaway: for developers asking questions and iterating on code, Opus 4.7 feels snappier. For agents running fully automated overnight pipelines, GPT-5.5’s fewer-tokens-per-task profile closes and often wins the speed race.

Agentic Capabilities: Where Each Model Thrives

This is where the Claude Opus 4.7 vs GPT-5 decision becomes a real strategic call.

Claude Opus 4.7’s Agentic Strengths:

- MCP-Atlas dominance — 77.3% on multi-tool orchestration vs GPT-5.5’s 68.1%. For agentic AI applications coordinating across CRM, email, calendar, and internal databases, Opus 4.7’s reliability is hard to match.

- Task budgets — A new public-beta feature giving developers an advisory token cap across an entire agentic loop. GPT-5.5 has no direct equivalent.

- xhigh effort level — A new reasoning tier that lets developers trade speed for accuracy on the hardest problems.

- Vision capability — High-resolution vision up to 3.75 megapixels for document processing, OCR, and diagram analysis. Critical for legal, finance, and healthcare workflows.

- Sub-agent orchestration — The new /ultrareview command in Claude Code spawns multiple sub-agents that independently explore codebases, surface bugs, and verify findings before reporting back.

GPT-5.5’s Agentic Strengths:

- Terminal-Bench 2.0 lead — 82.7% vs Opus 4.7’s 69.4%, a 13-point gap on a benchmark Anthropic had effectively owned. For command-line workflows, build pipelines, and DevOps automation, GPT-5.5 is now the model to beat.

- Native omnimodality — Text, images, audio, and video processed end-to-end in a single unified system. Most previous “multimodal” offerings were pipelines stitched together. GPT-5.5 is one model handling all modalities natively.

- Codex integration — Tighter sandbox execution loop. The model runs code, sees output, and iterates faster than DIY scaffolds around Opus 4.7.

- Computer use leadership — OSWorld-Verified scores show GPT-5.5 outperforming on browser-based and desktop GUI automation tasks.

Real Business Use Cases: Which Model Fits Which Workflow

- Customer Support Automation – Winner: Mostly tied, slight edge to GPT-5.5. For high-volume support automation handling thousands of conversations daily, GPT-5.5’s token efficiency and lower cost per resolved ticket matter more than reasoning depth. For complex escalations and nuanced customer issues, Opus 4.7’s reasoning quality is worth the higher cost. Teams building advanced AI chatbots often route tier-1 to GPT-5.5 and tier-2 escalations to Opus 4.7.

- Sales & Lead Qualification – Winner: Depends on volume. For high-volume outbound qualification (10,000+ contacts/month), GPT-5.5’s cost efficiency dominates. For high-touch enterprise sales requiring deep prospect research and personalized outreach, Opus 4.7’s reasoning quality justifies the cost. The pattern parallels what we see across AI tools to automate sales pipelines — multi-model routing wins over single-model commitment.

- Code Generation & Software Engineering – Winner: Claude Opus 4.7, decisively. SWE-Bench Verified at 87.6% and SWE-Bench Pro at 64.3% put Opus 4.7 ahead on the benchmarks that matter most for production-grade software engineering. For complex multi-file refactoring, code review, and architectural reasoning, Opus 4.7 is the right call.

- Document Processing & Vision – Winner: Claude Opus 4.7. The 3.75-megapixel vision capability handles complex documents, technical diagrams, and multi-page contracts at a quality level GPT-5.5 doesn’t match. For legal, finance, and healthcare document workflows, Opus 4.7 is the production standard.

- Research & Knowledge Work – Winner: GPT-5.5. BrowseComp at 90.1% (Pro tier) shows GPT-5.5 leading on web research, multi-step information synthesis, and long-form document analysis. For workflows requiring extensive web crawling, citation gathering, or real-time information retrieval, GPT-5.5 is the better fit.

- Computer Use & Browser Automation – Winner: GPT-5.5. Native omnimodality and OSWorld leadership make GPT-5.5 the choice for agents that need to operate browsers, navigate UIs, and interact with desktop applications.

- Enterprise Integration & Multi-Tool Orchestration – Winner: Claude Opus 4.7. MCP-Atlas leadership and the new task budget feature make Opus 4.7 the safer choice for production agents coordinating across multiple enterprise systems — the kind of orchestration powering modern AI agents replacing SaaS tools.

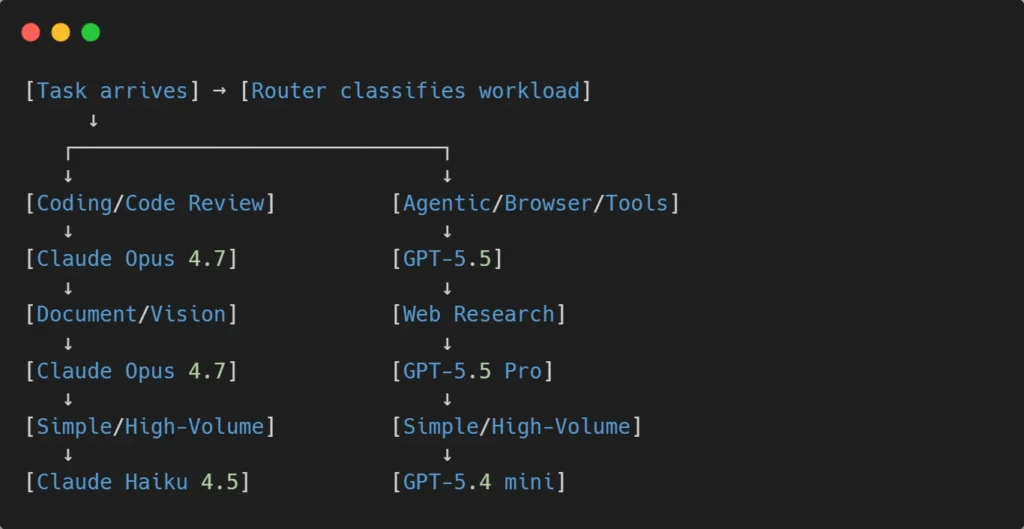

The Multi-Model Strategy: What Smart Teams Are Actually Doing

The most sophisticated AI deployments in 2026 don’t pick one model. They route tasks to the right model based on workload type:

This routing approach optimizes both cost and quality. Frontier models handle frontier tasks. Cheaper models handle high-volume routine work. Total spend often drops 40-60% compared to running everything on a single flagship model.

For teams building this kind of intelligent routing into their stack, API-first development is the architectural foundation that makes multi-model strategies practical — clean abstractions over each provider’s API, model swapping without code rewrites, and the flexibility to adopt newer models (Opus 4.8, GPT-5.6) as they ship.

Limitations You Should Know Before Choosing

Both models have real downsides that vendor announcements gloss over:

Claude Opus 4.7 limitations:

- Verbosity drives up token costs in long agentic loops.

- Long-prompt surcharge (2× above 200K tokens) penalizes massive-context workloads.

- Capped at 150 seats on Team plans — may not fit large enterprise org structures.

- Vision quality regression possible on extreme aspect ratios.

GPT-5.5 limitations:

- Significantly higher latency to first token (~3s vs ~0.5s for Opus 4.7).

- “Fails forward” pattern — confidently completes tasks that turn out subtly wrong.

- 2× price increase from GPT-5.4 ($2.50/$15 → $5/$30).

- GPT-5.5 Pro pricing ($30/$180 per 1M) is significantly more expensive than Opus 4.7.

For both models: Benchmark numbers are self-reported by each vendor at their preferred reasoning tier. Independent third-party benchmarking on shared scaffolds is still emerging. Always run your own evaluation on your actual workload before committing.

The Decision Framework: How to Choose

Choose Claude Opus 4.7 if:

- Coding and code review are your primary use cases.

- You process documents, images, or visual content regularly.

- Your agents coordinate across many internal tools (MCP servers, internal APIs).

- Interactive latency matters (chatbots, IDE assistants, voice agents).

- You need vision capability for document processing.

Choose GPT-5.5 if:

- Browser automation and computer use are central to your workflows.

- You build agents that run long autonomous sessions in terminals.

- Web research, web scraping, or information retrieval is core.

- Token efficiency matters at extreme scale (10M+ tokens/day).

- You’re already deeply integrated with OpenAI’s ecosystem (ChatGPT, Codex).

Use Both with Intelligent Routing if:

- You’re running production AI workloads at scale.

- Different parts of your product have different optimization needs.

- You can invest in routing infrastructure (often pays back in 30-60 days).

- You want resilience against single-vendor outages.

Conclusion: Claude Opus 4.7 vs GPT-5 Has No Single Winner

The Claude Opus 4.7 vs GPT-5 comparison doesn’t pick a winner because both labs optimized for different things. Anthropic doubled down on coding precision, instruction-following, and reasoning depth. OpenAI invested in token efficiency, agentic execution, and multi-modal native architecture.

For your business in 2026, the right answer is rarely “use one model exclusively.” It’s “use the right model for each task.” Opus 4.7 for the work where reasoning quality and coding precision matter most. GPT-5.5 for the work where speed, conciseness, and agentic execution matter most. Cheaper models like Claude Haiku 4.5 or GPT-5.4 mini for high-volume routine tasks.

The teams that build AI products this way — multi-model, intelligently routed, model-agnostic at the architecture layer — will outperform teams that pick a single vendor and try to use it for everything. That’s the strategic insight worth more than any benchmark.

Who we are at Orbilon Technologies

Orbilon Technologies is an AI development agency that builds production AI systems on Claude Opus 4.7, GPT-5.5, and multi-model architectures, including custom AI agents, automation pipelines, and enterprise integrations. With years of engineering experience and a 4.96 average rating across Clutch, GoodFirms, and Google, we help businesses pick the right AI model for each workflow and deploy systems that deliver measurable ROI.

Not sure which AI model fits your business best? Get a free consultation from our AI engineering team.

- Website:orbilontech.com

- Email: support@orbilontech.com

Want to Hire Us?

Are you ready to turn your ideas into a reality? Hire Orbilon Technologies today and start working right away with qualified resources. We will take care of everything from design, development, security, quality assurance, and deployment. We are just a click away.