GPT-5.4 for Enterprise: The Enterprises That Understand This Will Outbuild Everyone in 2026

Introduction

On March 5, 2026 — just two days ago — OpenAI quietly dropped one of the most practically significant model releases in its history. No massive keynote. No countdown. Just a product blog titled “Introducing GPT-5.4” and a model that immediately set new records across every professional benchmark that matters for real business work.

GPT-5.4 for enterprise isn’t a research experiment or a benchmark trophy. It’s a frontier model designed specifically for the workflows that companies actually depend on — coding, document analysis, spreadsheet modeling, multi-step agentic tasks, and computer use. The gap between enterprises already building with it and those still evaluating AI just became a chasm.

Here’s everything you need to understand about what GPT-5.4 is, what’s genuinely new, which industries can move fastest with it, and how to start integrating it today.

What Is GPT-5.4 and What Makes It Different?

| Spec | GPT-5.4 | GPT-5.2 (Previous) |

|---|---|---|

| Context window | 1,050,000 tokens | 128,000 tokens |

| Input price (per 1M tokens) | $2.50 | Higher |

| Output price (per 1M tokens) | $15.00 | Higher |

| SWE-Bench Pro (coding) | 57.7% | 55.6% |

| BrowseComp (research/retrieval) | 82.7% | 65.8% |

| Toolathlon (multi-tool orchestration) | 54.6% | 46.3% |

| OSWorld-Verified (computer use) | 75% | Lower — beats human avg of 72.4% |

| Spreadsheet modeling benchmark | 87.3% | ~79% |

| Availability | ChatGPT, API, Codex | Same |

What's Actually New: The 5 Biggest Changes?

1. Native Computer Use — For the First Time in a General Model

For the first time in a general-purpose OpenAI release, GPT-5.4 has native computer-use capabilities. The model can interact with operating systems, websites, and applications using mouse, keyboard, and visual inputs — enabling it to operate software and carry out complex workflows across multiple applications autonomously.

On OSWorld-Verified, it scores 75% — beating the average human tester score of 72.4%. This means GPT-5.4 for enterprise can literally operate your software stack as an agent. No custom integration required.

2. 1 Million Token Context Window

GPT-5.4 supports 1,050,000 tokens of context — almost 8x the previous 128K limit. That’s roughly 800,000 words processed in a single prompt. You can now feed the model an entire codebase, a full year of financial filings, a complete legal case archive, or an entire knowledge base — and it reasons across all of it without chunking or losing context.

OpenAI notes that this allows agents to “plan, execute, and verify tasks across long horizons“—a direct enabler of serious enterprise automation.

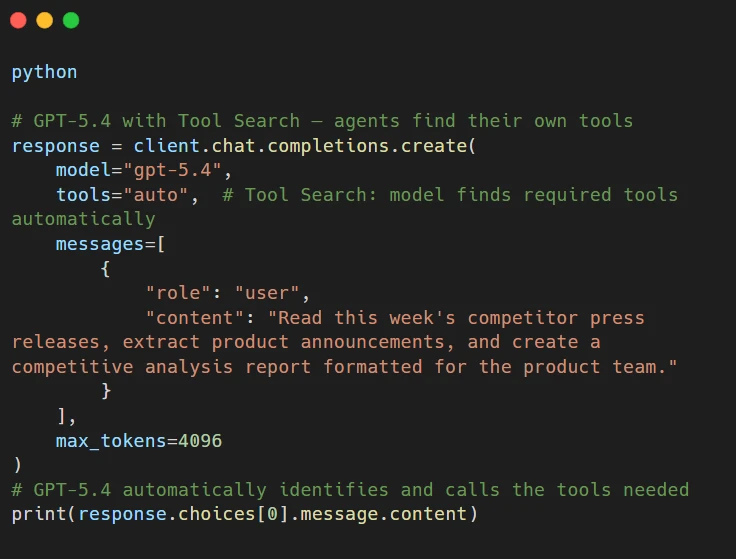

3. Tool Search — Agents Find Their Own Tools

Previously, developers had to prepare a detailed list of every tool an application uses and include it in every API request. GPT-5.4 introduces Tool Search — a new system where the model automatically finds the tools an application requires for a given task. This reduces prompt sizes, cuts inference costs, and makes agentic applications significantly easier to build and maintain at scale.

4. Significantly Fewer Tokens Used

GPT-5.4 uses “significantly fewer tokens” than GPT-5.2 to complete the same tasks. At $2.50 per million input tokens, combined with reduced token usage, GPT-5.4 for enterprise is meaningfully more cost-efficient for production workloads than its predecessor, despite being a more capable model.

5. Codex-Grade Coding Built In

GPT-5.4 incorporates the coding capabilities of GPT-5.3-Codex — OpenAI’s most capable agentic coding model — directly into the mainline model. A fast “Codex mode” delivers up to 1.5x speed gains, and an experimental Playwright Interactive feature allows Codex to visually debug web and Electron applications in real time.

Real Enterprise Use Cases

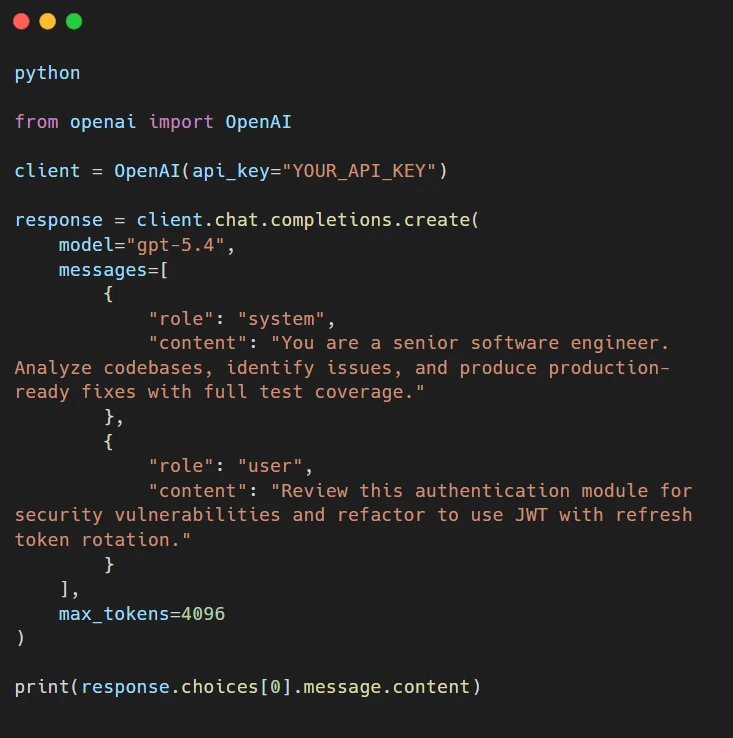

a. Software Engineering & Code Review

GPT-5.4 scores 57.7% on SWE-Bench Pro — a meaningful improvement over GPT-5.2 — and brings Codex-grade coding into a single model. For enterprise engineering teams, this means:

- Automated debugging of production code.

- Feature implementation across large codebases.

- End-to-end PR creation, testing, and review.

- Real-time visual debugging of web applications via Codex Playwright.

b. Financial Modeling & Analysis

GPT-5.4 achieves 87.3% on OpenAI’s internal spreadsheet modeling benchmark — an 8+ point improvement over GPT-5.2. For finance teams, this means building financial models, analyzing earnings reports, running scenario planning, and generating investment research — all at a level that OpenAI specifically compares to junior investment banking analyst output.

Mercor CEO Brendan Foody put it directly: GPT-5.4 “excels at creating long-horizon deliverables such as slide decks, financial models, and legal analysis.”

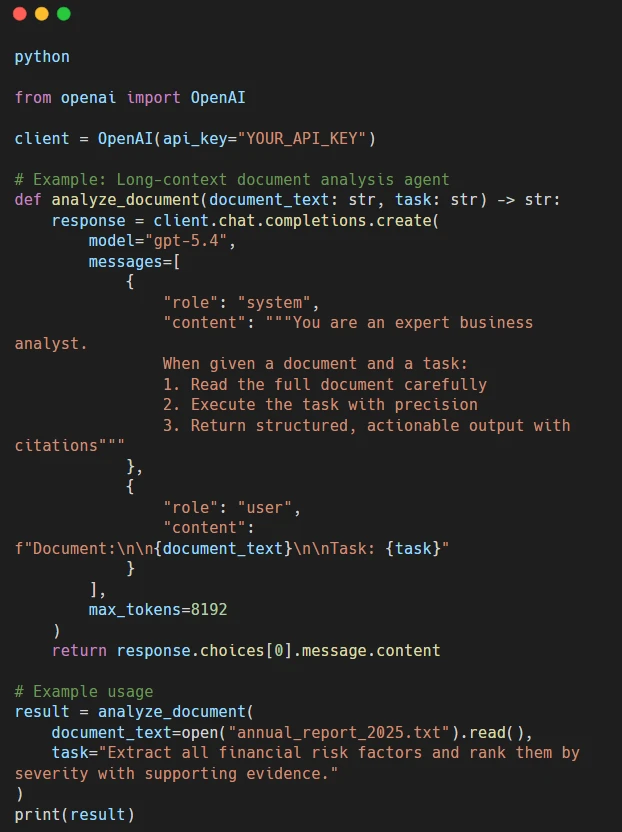

c. Document Intelligence & Legal Analysis

With a 1M token context window, GPT-5.4 can process entire legal case files, contract archives, and regulatory document sets without chunking. For legal teams, this means:

- Full contract analysis and clause extraction in one pass.

- Regulatory compliance review across entire policy libraries.

- Due diligence document summarization at scale.

- Side-by-side contract comparison across hundreds of pages.

d. Research & Competitive Intelligence

BrowseComp — which measures a model’s ability to search, select, and integrate web information — jumped from 65.8% (GPT-5.2) to 82.7% in GPT-5.4. For research-heavy roles, this is the most practically significant benchmark improvement in this release. Agents built on GPT-5.4 can now conduct thorough, multi-source research tasks autonomously and reliably — a capability that was brittle in earlier models.

e. Multi-Step Agentic Workflows

The Toolathlon benchmark (multi-step tool orchestration) improved from 46.3% to 54.6%. OpenAI’s example task: “an agent needs to read emails, extract assignment attachments, upload them, grade them, and record results in a spreadsheet.” GPT-5.4 handles this kind of complex, multi-application workflow with significantly better accuracy than any previous model.

Industries That Benefit Most Right Now

- Software & Tech Companies — Unified reasoning + Codex-grade coding in one model. Teams using GPT-5.4 in Codex can build, debug, test, and ship faster with fewer model switches and lower total cost per task.

- Finance & Investment Banking — The spreadsheet benchmark score, long-context financial document analysis, and BrowseComp research capabilities directly address the core workload of finance teams. Early enterprise adopters include BBVA, which used pre-release access for financial analysis.

- Legal & Professional Services — Full-document contract analysis, regulatory review, and research synthesis. The 1M context window removes the single biggest limitation for legal document workflows.

- Healthcare & Life Sciences — Clinical literature synthesis, regulatory submission analysis, patient data reporting, and long-document protocol review. The improved accuracy and lower hallucination rate matter critically in this domain.

- E-Commerce & Retail — Native computer-use agents can operate internal tools, update inventory systems, generate product descriptions, and run customer support workflows across multiple platforms simultaneously. Shopify is already listed as a GPT-5.4 enterprise partner.

- Media, Publishing & Research — The BrowseComp improvement (+17 points) directly benefits research-intensive publishing workflows, automated fact-checking pipelines, and competitive intelligence operations.

- Professional Services & Consulting — Long-horizon deliverable generation (reports, decks, models) is where GPT-5.4 specifically positions itself. Consulting teams can produce client-facing work faster with more consistent quality.

How to Implement GPT-5.4 for Enterprise

Option 1: ChatGPT (No Code)

GPT-5.4 Thinking is available now to ChatGPT Plus, Team, and Pro subscribers. GPT-5.4 Pro — the highest-performance variant — is available on Pro and Enterprise plans. Start here for workflow experimentation, document processing, and prompt engineering before committing to API integration.

Option 2: Direct API Integration

Model IDs: gpt-5.4 (latest alias) or gpt-5.4-2026-03-05 (pinned snapshot for reproducibility).

Option 3: Agentic Workflow with Tool Search

Pricing Note for Production Planning

| Usage Pattern | Cost Impact |

|---|---|

| Standard input (≤272K tokens) | $2.50 / 1M tokens |

| Cached input (repeated system prompts) | $0.25 / 1M tokens — 90% cheaper |

| Output tokens | $15.00 / 1M tokens |

| Very large context (>272K input tokens) | 2× input + 1.5× output pricing |

| Batch processing | Reduced rates available |

GPT-5.4 vs Claude Opus 4.6: Which Should You Use?

| Capability | GPT-5.4 | Claude Opus 4.6 |

|---|---|---|

| Context window | 1,050,000 tokens | 1,000,000 tokens (beta) |

| Input price | $2.50/1M | $5.00/1M |

| Output price | $15.00/1M | $25.00/1M |

| Computer use | Native (75% OSWorld) | Available |

| Coding (SWE-bench) | 57.7% | 80.8% |

| Knowledge work | Strong | #1 on GDPval-AA |

| Tool orchestration | 54.6% Toolathlon | Strong |

| Spreadsheet modeling | 87.3% | Lower |

GPT-5.4 vs Claude Opus 4.6: Which Should You Use?

The gap between enterprises actively building with GPT-5.4 and those still evaluating AI isn’t about features anymore. It’s about compound advantage.

Every week, a team uses GPT-5.4 for enterprise in production, they’re learning what prompts work, what workflows are worth automating, what the edge cases are, and how to build reliable agentic pipelines. That knowledge accumulates. Teams that start in March 2026 will be six months ahead of teams that start in September — not just in tools, but in institutional understanding of how to use them.

The computer-use capability alone changes what’s possible. An agent that can operate your internal software stack — opening Jira, updating Salesforce, generating a report in Excel — without requiring custom integration per tool is a different class of automation than what existed three months ago.

The question isn’t whether to use GPT-5.4 for enterprise. It’s which process to automate first?

Conclusion: The Window Is Open Right Now — Use It

GPT-5.4 for enterprise was launched two days ago. The benchmark improvements are real, the pricing is competitive, and the capabilities — especially native computer use, 1M token context, and Tool Search — open up automation categories that weren’t practical before.

The enterprises that move in the next 30 days gain a structural advantage over those that wait. Not because the tools will disappear, but because the learning compounds. Build the first workflow this week. Learn what breaks. Fix it. Build the next one.

The gap between enterprises using GPT-5.4 and those still evaluating AI just became a chasm. Which side are you on?

About Orbilon Technologies

At Orbilon Technologies, we build AI-powered web apps, mobile applications, SaaS platforms, and custom software solutions for startups and enterprises worldwide. Based in Lahore, Pakistan, with a US presence, our team brings hands-on, production-grade experience integrating enterprise AI models — including GPT-5.4, Claude Opus 4.6, and agentic pipelines — into real business workflows.

We’ve delivered AI solutions for clients across the US, UK, and beyond, holding a 4.96 rating on Clutch and GoodFirms. We don’t just follow AI releases — we build production systems with them the week they ship.

Website: orbilontech.com

Email: support@orbilontech.com

Ready to Build with GPT-5.4 for Enterprise?

Whether you’re integrating GPT-5.4 into your first workflow or designing a full multi-agent enterprise system, Orbilon Technologies can help you architect, build, and ship it fast — with measurable ROI from day one. Book a Free Consultation

Want to Hire Us?

Are you ready to turn your ideas into a reality? Hire Orbilon Technologies today and start working right away with qualified resources. We will take care of everything from design, development, security, quality assurance, and deployment. We are just a click away.